Beyond the Black Box: Designing for Trust and Clarity in Autonomous AI Systems

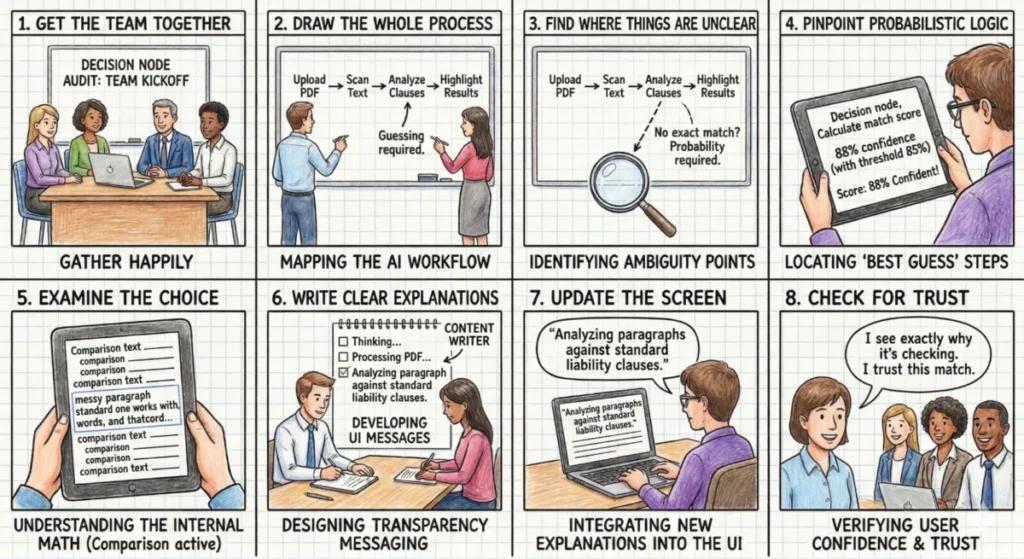

The rapid proliferation of agentic artificial intelligence (AI) systems, designed to perform complex tasks autonomously, has introduced a critical challenge for developers and users alike: maintaining transparency and fostering trust.…