Posted in SEO and Search Marketing

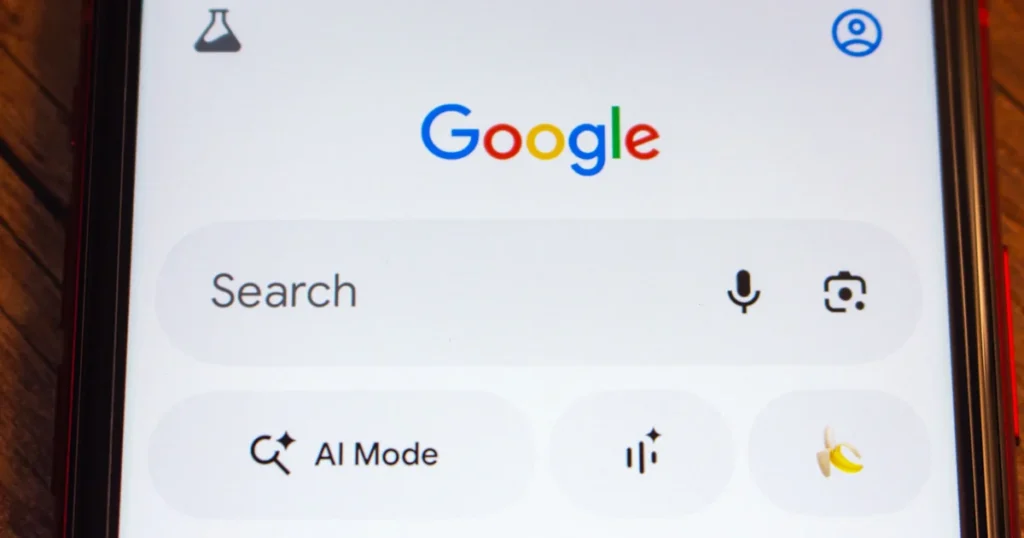

Google AI Mode in Chrome Gets Side-by-side Browsing

Google has announced a significant update to AI Mode in its Chrome desktop browser, marking a new milestone in the integration of artificial intelligence directly into the web browsing experience.…