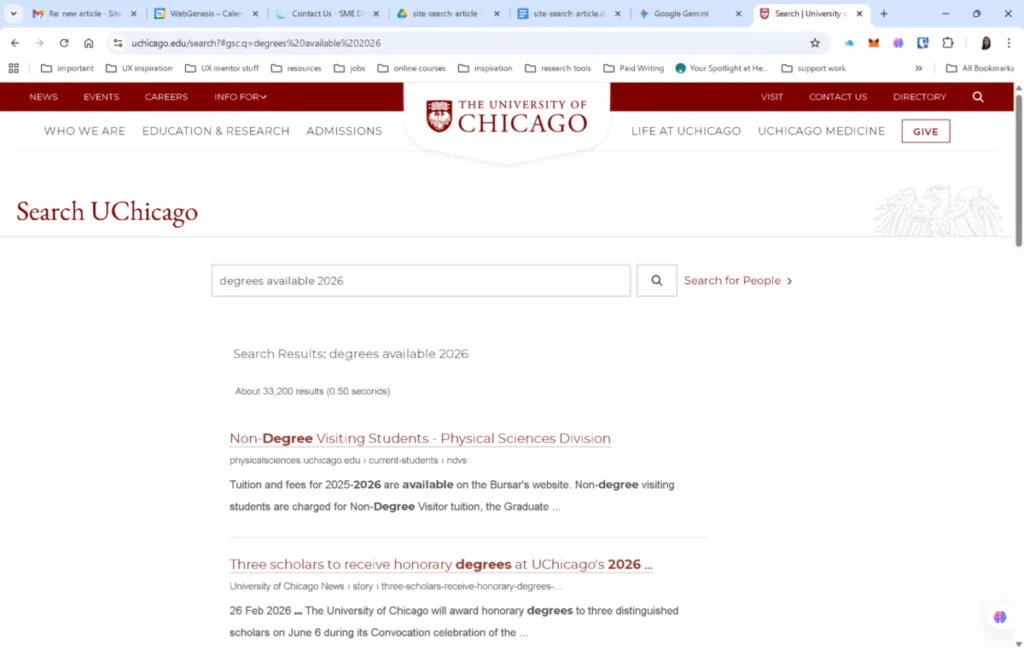

The Site-Search Paradox: Why Google Still Wins Over Internal Site Search

Modern user experience (UX) is increasingly defined not by the sheer volume of content a website offers, but by the ease with which users can locate specific information within it.…