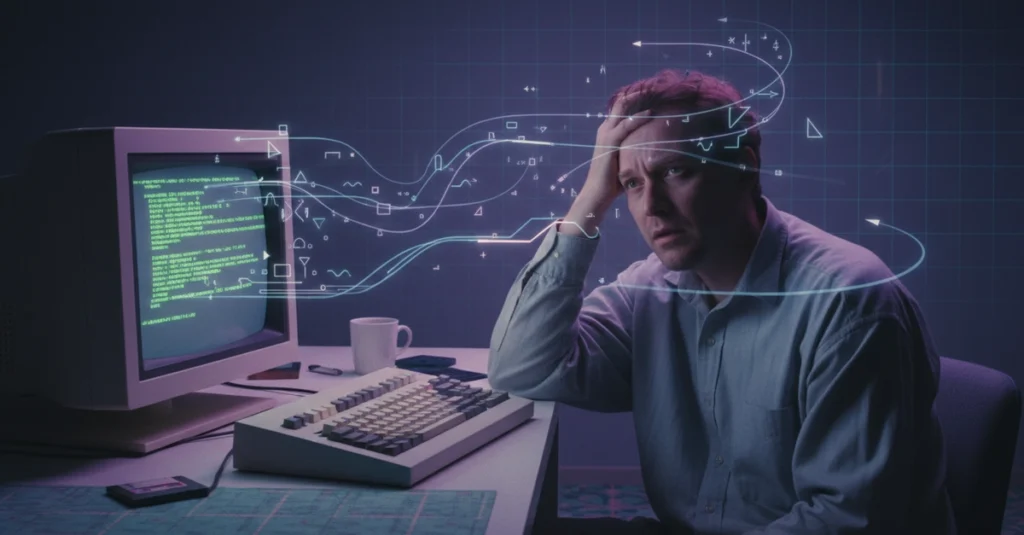

The Unseen Hurdles: Why 90% of Aspiring Developers Abandon Their Journey Within Six Months, and the Strategies of the Resilient 10%

The journey into software development, often perceived as a straightforward path to innovation and lucrative careers, presents a formidable psychological and intellectual challenge that leads a significant majority of beginners…