The Evolving Landscape of Local-First Web Application Development in 2026

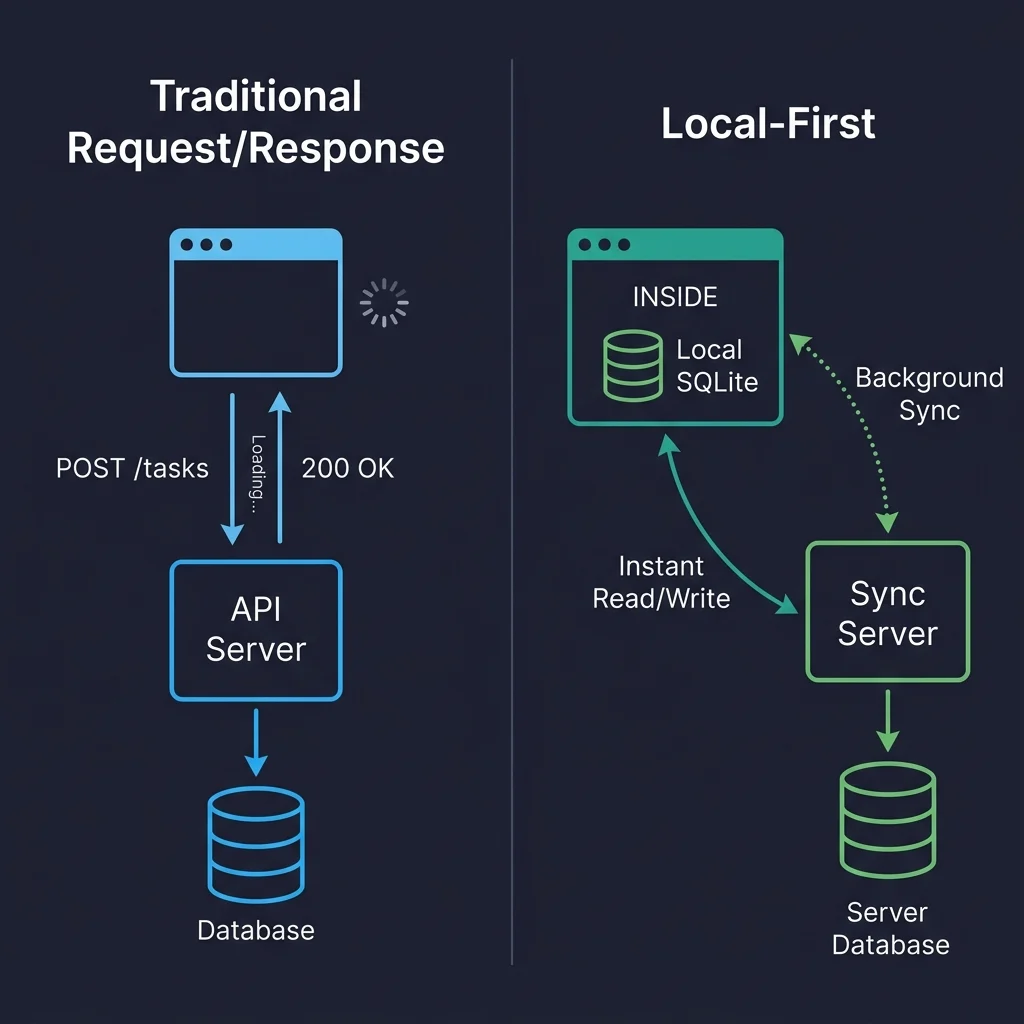

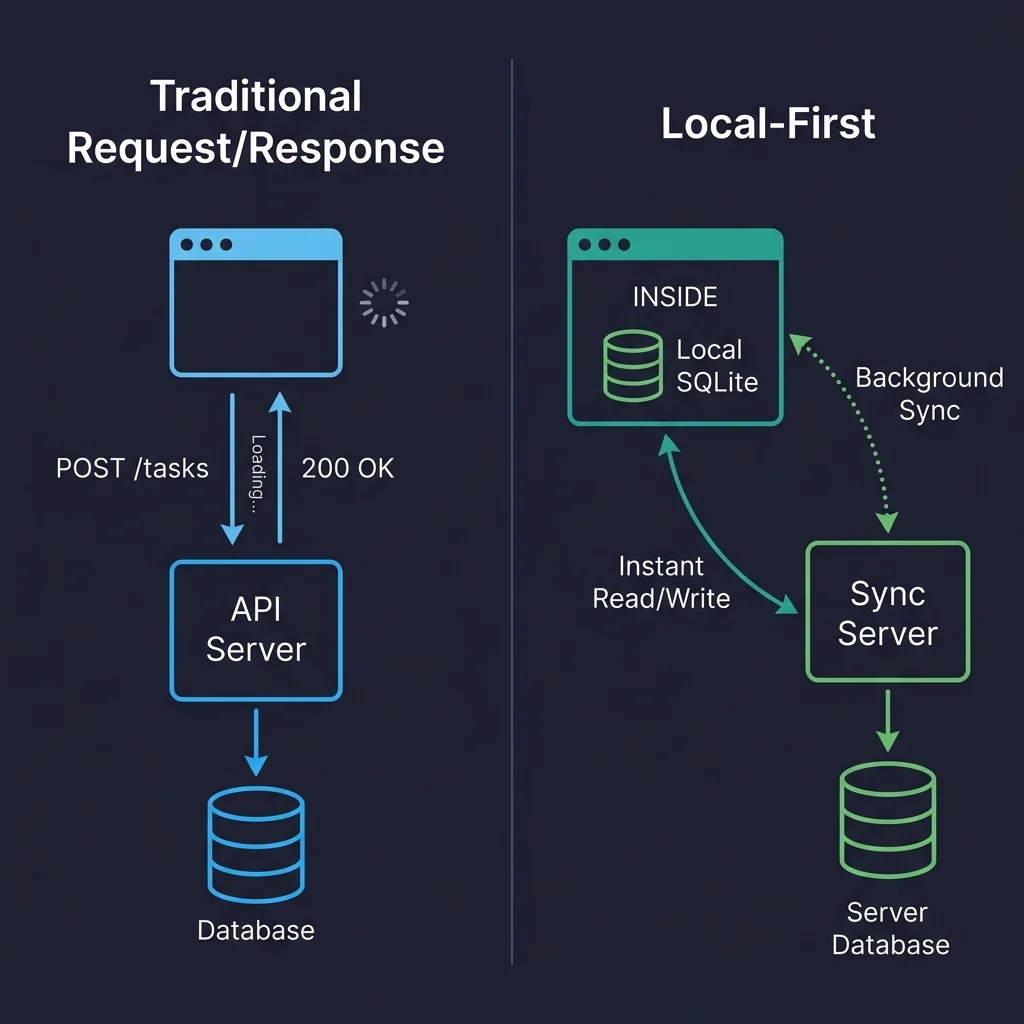

Recent industry observations underscore the critical need for resilient web applications, particularly in environments with inconsistent internet access. A developer's experience in late 2025, attempting to demonstrate a project management…