
The Transformative Role of Citations in Answer Engine Optimization: A New Era for Content Visibility

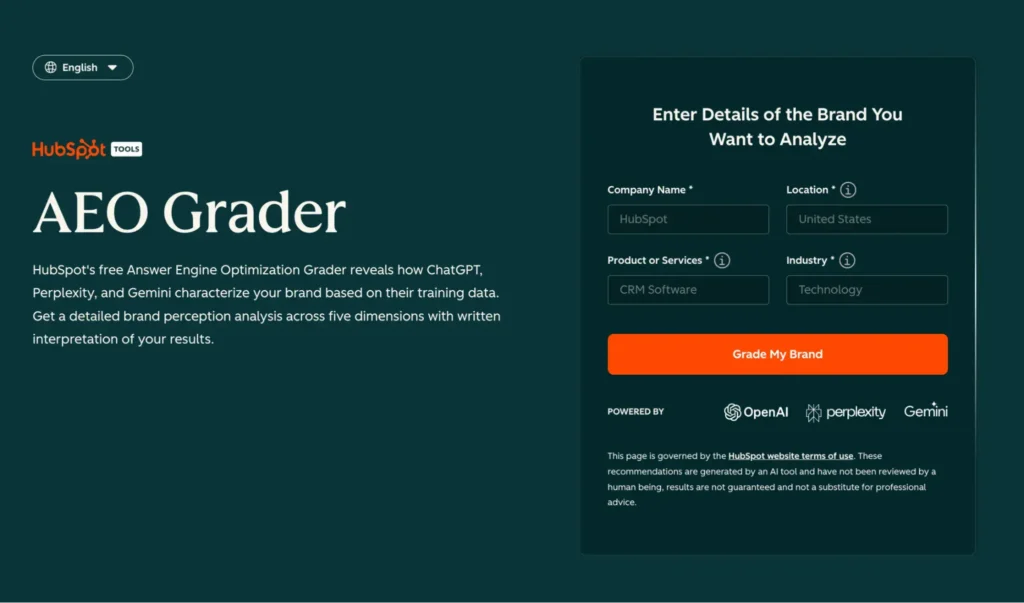

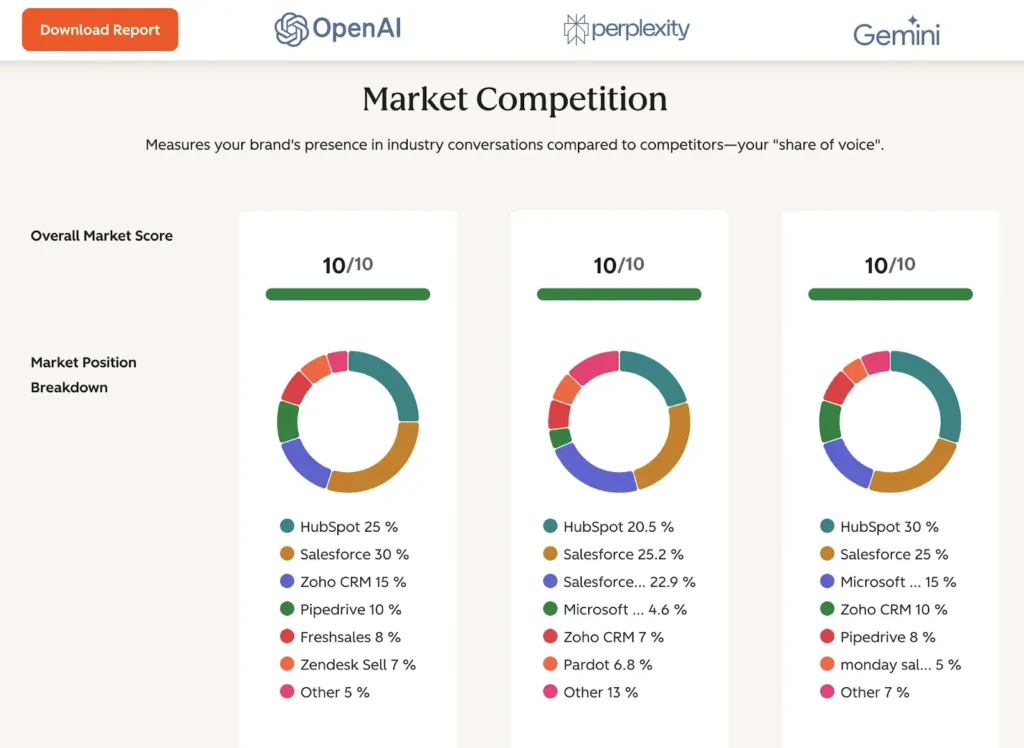

The long-established playbook for Search Engine Optimization (SEO), primarily centered on earning backlinks to climb rankings and capture clicks, is undergoing a profound transformation. As artificial intelligence (AI) rapidly reshapes…