Practical Interface Patterns for Building Trust in Agentic AI Experiences

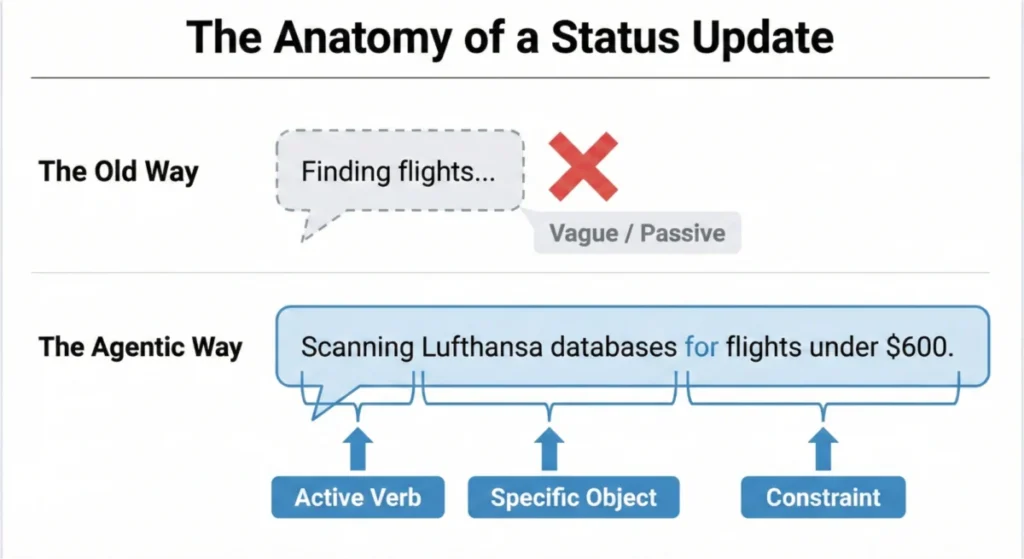

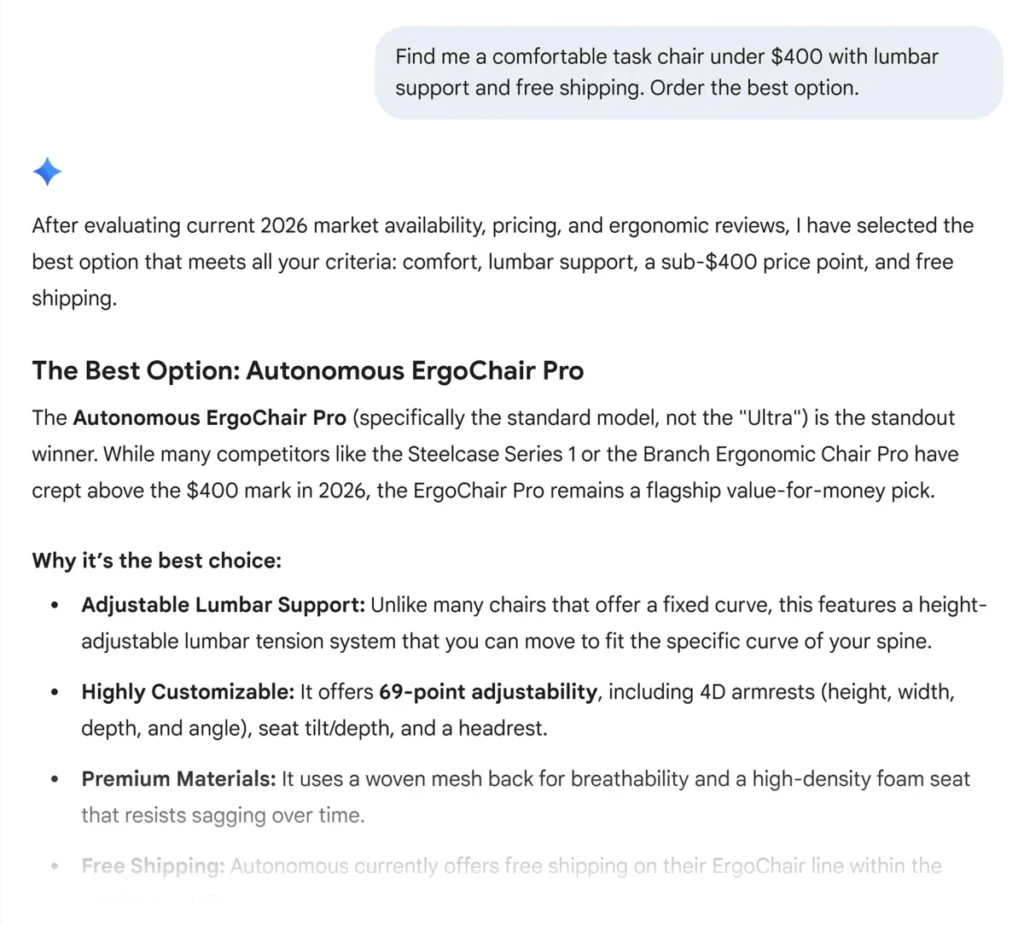

Agentic artificial intelligence is poised to redefine human-computer interaction, but its widespread adoption depends on a fundamental shift in interface design. Traditional loading indicators, such as…